Loosh, a decentralized AI project, is now live on the Bittensor network as Subnet 78. Think of it as opening a new public game server, except the "game" is AI performance. People can now join as miners (the ones doing the work) or validators (the ones judging the work). Instead of Loosh running everything privately, the system is now out in the open, where lots of independent operators can compete to improve it.

Bittensor, Explained Like You’re Five

Imagine a big playground with lots of little stations. Each station is a subnet, and each subnet has one job.

Loosh's Subnet 78 is the station whose job is to help an AI system think more clearly, not just spit out words.

Now, here are the two roles:

- Miners are like kids building Lego towers.

- Validators are like judges who check which towers are strongest and best built.

The playground rewards the best builders. That reward is what motivates everyone to do good work instead of sloppy work.

What Loosh Is Actually Trying to Build

Loosh isn’t trying to be “another chatbot.”

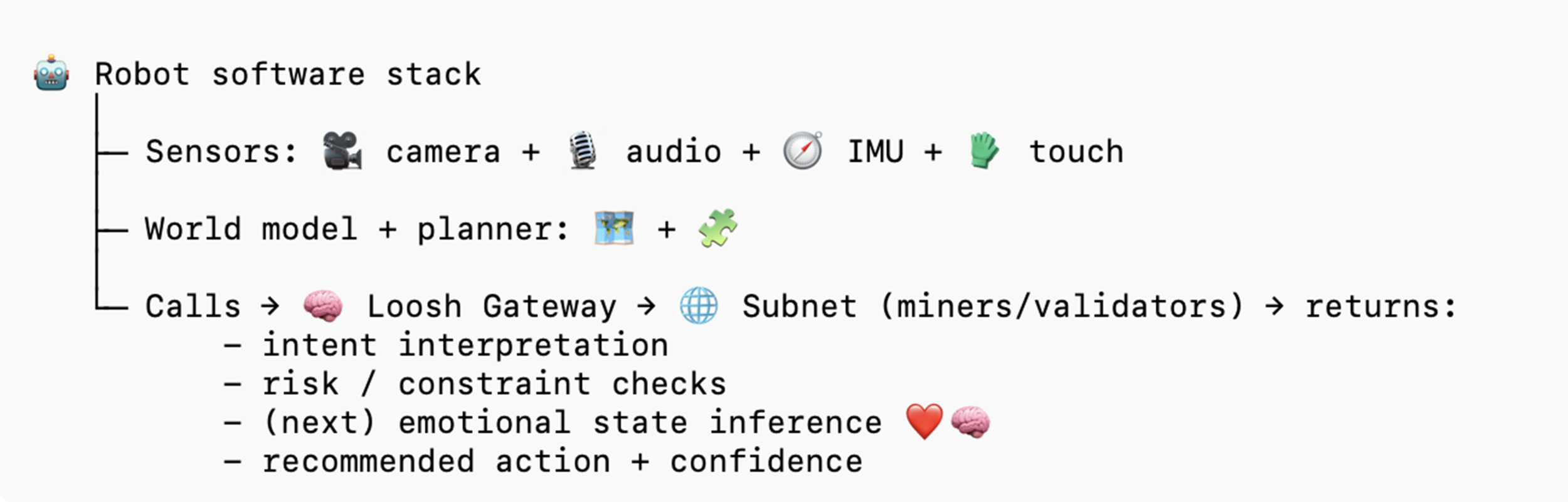

Loosh is trying to build something closer to a thinking layer that robots can plug into.

A simple way to picture it:

- Regular AI: “Here’s an answer.”

- Loosh-style AI: “Here’s an answer, but I also checked rules, tradeoffs, and what could go wrong.”

That matters a lot when the AI isn't just talking but moving around in the real world, like a robot in a home, a hospital, or a workplace.

Who’s Behind Loosh

Loosh was co-founded by Lisa Cheng and Chris Sorel.

- Lisa Cheng is a longtime builder in blockchain and emerging tech, focused on how systems and incentives shape outcomes. She’s spent more than a decade in crypto and infrastructure work and thinks a lot about governance, controls, and reliability.

- Chris Sorel is the technical co-founder. He builds the architecture and engineering behind the subnet and brings more than 20 years of experience with production-grade software systems. He is also deeply interested in robotics and is building DIY robot dogs for his kids, which will be the first integration for Loosh's cognition engine.

The Next Big Step: Teaching AI to Understand Emotions

Loosh says its team includes a neuroscientist who has used EEG and fMRI data to build a model that can identify a person's emotional state with about 70% accuracy (within the limits of that data and its labeling).

The goal is to turn that kind of research into a new subnet capability: emotional inference, meaning the AI can get better at recognizing emotion, not just reading words.

Why that matters:

A robot in your kitchen needs to understand the difference between:

- “I’m fine” (calm)

- “I’m fine” (furious)

- “I’m fine” (scared)

- “I’m fine” (about to cry)

Humans communicate emotion in messy, nonverbal ways. If robots can't read those signals, they can easily respond the wrong way at the worst possible moment.

What Happens Now That It’s Live on Subnet 78

Now that Loosh is on Bittensor, it has to work in public:

- Miners will run Loosh tasks and generate results.

- Validators will test those results and score them.

- The network will reward good performance and give weak performance little or nothing.

So, the launch isn’t just an announcement. It’s a stress test.

If Loosh can attract strong miners and validators and keep the scoring fair, it creates a real improvement loop: better outputs get rewarded, and the system improves over time.

That’s the bet: not “trust us,” but “measure it.”